
Vision Pro's Next Leap: Enhanced Accessibility with On-Device AI

Apple is bringing new accessibility features to Vision Pro that use on-device AI to help users with low vision. The updates will magnify the real-world passthrough view and add 'Live Recognition', which describes the user's surroundings.

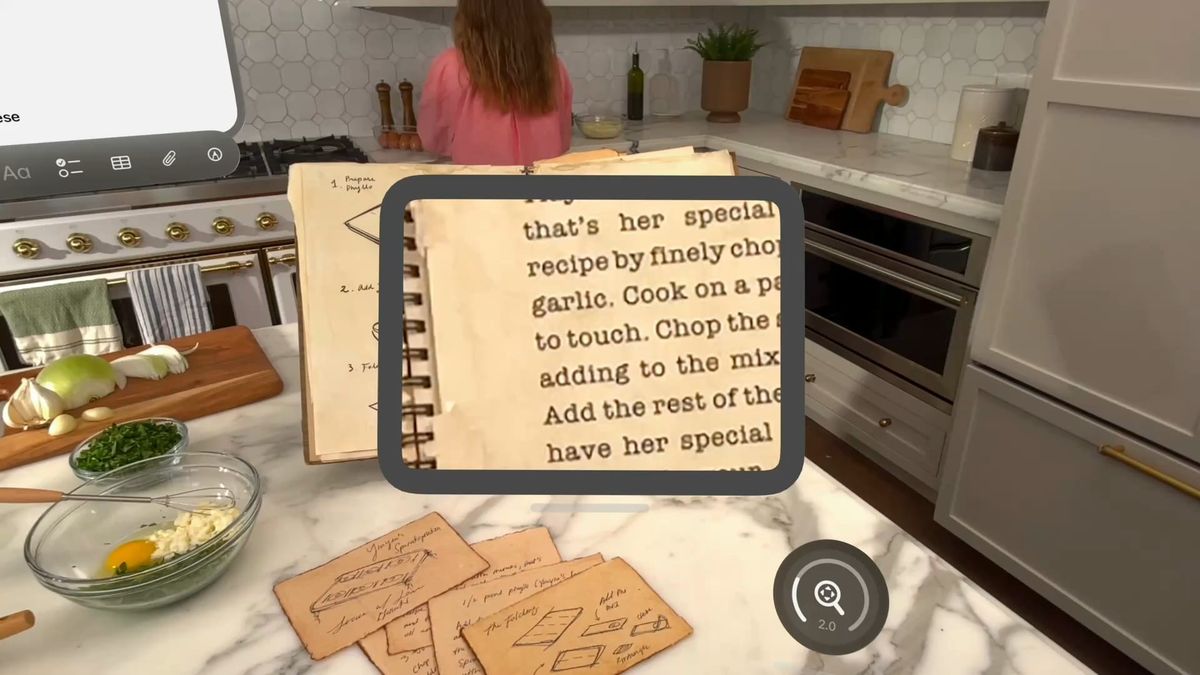

A Vision Pro user magnifying a real-world object in the passthrough view, illustrating the new accessibility features.

Apple announced on May 13, 2025, that its Vision Pro headset will soon receive significant accessibility enhancements, particularly for users with low vision. The upcoming features will magnify the real-world passthrough view and use on-device machine learning to describe the user's surroundings.

UploadVR reports that the new features are slated to arrive in a visionOS update later this year. The outlet notes that Apple has previously announced accessibility features about a month before WWDC, which suggests these capabilities could ship as part of visionOS 3, expected to be unveiled at WWDC25 next month.

Chief among the updates is an expanded 'Zoom' accessibility feature which, according to UploadVR, will magnify not only virtual content but also the real world seen through the passthrough cameras. A new 'Live Recognition' feature will also extend VoiceOver's existing screen-reader functionality, using on-device machine learning to 'describe surroundings, find objects, read documents, and more' in the user's passthrough view.

Video: Apple on YouTube

The outlet also highlights Apple's plan to offer a new API for 'accessibility developers' in 'approved apps' to access the passthrough view. This API is intended to facilitate 'live, person-to-person assistance for visual interpretation,' with Be My Eyes cited as an initial partner.

Our take: This move significantly extends the Vision Pro's utility beyond entertainment and productivity and strengthens its case as genuine assistive technology. Competitors such as Meta's Horizon OS and Google's Android XR offer more open access to passthrough camera APIs for general applications. By contrast, Apple is initially limiting passthrough access to approved accessibility apps, which suggests a deliberate focus on privacy and controlled deployment. This tight integration of on-device AI for accessibility could set a new benchmark for mixed reality platforms, showing a use case that directly improves quality of life rather than merely adding convenience. We will be watching WWDC25 closely for further details on these features and any broader passthrough API access.

Source: UploadVR
