
Snap's First Spectacles Developer Bootcamp: Shaping the Future of AR

Snap recently hosted its inaugural Spectacles Developer Bootcamp, bringing together 45 top developers in Santa Monica. The event provided an in-depth look at SnapOS, sparse mapping, and AI-native Lens development, fostering collaboration and technical advancement in AR.

A group of people wearing smart glasses looking at a screen.

This week, Snap brought together 45 developers for its first-ever Spectacles Developer Bootcamp at its Santa Monica headquarters. The event focused on advancing the augmented reality (AR) experiences possible with Spectacles. Over the past year, these developers have collaborated on ambitious projects and shared insights on platforms such as Discord and Reddit.

The Bootcamp gave the Spectacles engineering teams and the developer community a chance to engage directly. Snap described the gathering as a "meaningful investment," designed to share technical expertise, exchange ideas, and enable the creation of more sophisticated AR experiences. Attendees traveled from around the world, including Zambia, Sweden, and Belgium.

The agenda was structured around developer feedback, prioritizing direct access to engineers, in-depth tool discussions, and a forward-looking view of the platform. Key sessions included early insights into the future of SnapOS and its accompanying developer tools.

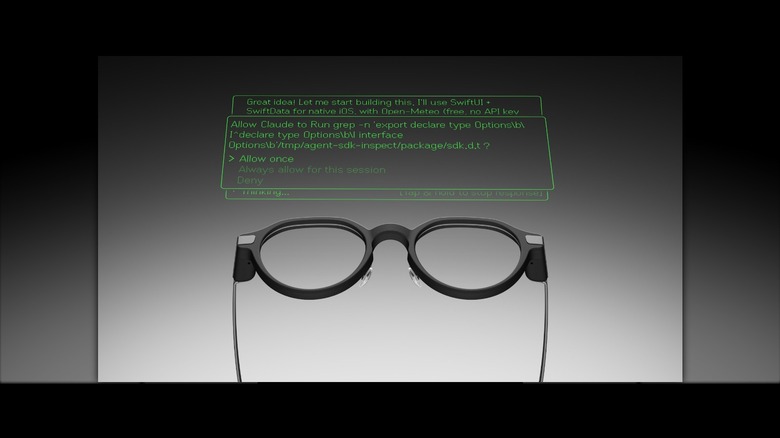

Technical deep dives covered sparse mapping, explaining how Spectacles interprets and remembers physical spaces and how developers can design Lenses that take advantage of this capability. Another significant segment focused on AI-native Lens development, featuring a session on Agent Manager and hands-on demonstrations of AI tools integrated into the Spectacles build process.

Practical topics such as spatial UI and performance optimization were also addressed, including techniques like mesh decimation and shader optimization that help Lenses run smoothly and look good on Spectacles. Developers also received guidance on using Snap Cloud, which is powered by Supabase, for persistent AR experiences, along with a comprehensive guide to getting the most out of the Spectacles Interaction Kit (SIK) and UI Kit.

Source: Snap Newsroom
