Analysis · Apple

AirPods With Cameras Are Apple’s Real Meta-Killer, Not Vision Pro

Bloomberg reports camera-equipped AirPods are in final testing, gated only by the next-gen Siri. By embedding AI vision into a device people actually wear, Apple is poised to attack Meta's lead in AI glasses from the flank.

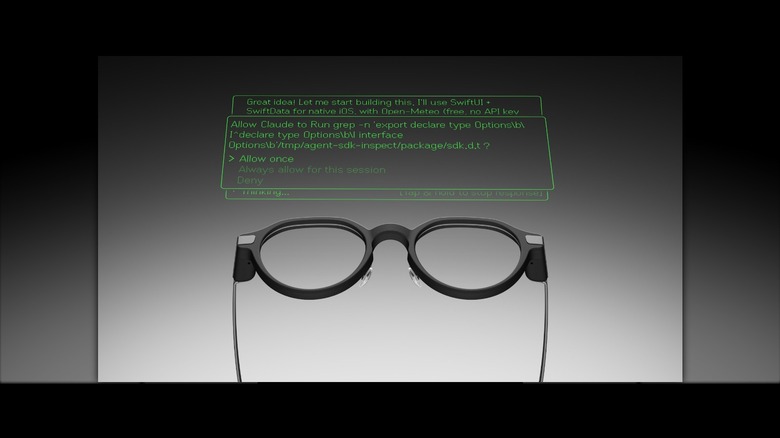

Illustration: Smart Glasses Daily

Forget the Vision Pro. While pundits fixate on Apple’s spatial computing moonshot, the company’s real Trojan horse for mainstream augmented reality is hiding in plain sight: your ears. According to Bloomberg's Mark Gurman, Apple is in the final testing stages for AirPods equipped with low-resolution cameras, a product designed not for photos, but for an always-on, AI-driven view of your world. The hardware is nearly done; the only thing holding it back is a Siri smart enough to understand what it's seeing.

This isn't just another product line extension. This is a category-defining ambush. While Meta has spent years trying to convince people to put a computer on their face, Apple is preparing to put computer vision into a product line that ships an estimated 75 million units a year, bought without a second thought. The social friction for smart glasses remains immense, but for earbuds? It's close to zero. Almost no behavioral change is required.

The details from the leak, as covered by Frandroid, paint a picture of classic Apple pragmatism. The design is an iteration of the AirPods Pro, with slightly elongated stems to house cameras and an LED indicator light for privacy. Crucially, these aren't 12-megapixel sensors for your Instagram feed; they are low-res inputs for 'Visual Intelligence,' Apple's name for the camera-driven features of Apple Intelligence. This is about contextual awareness, not content creation.

Let’s do the math that should be keeping Mark Zuckerberg up at night. Meta celebrated selling around two million Ray-Ban Meta glasses through 2024, a respectable number for a new category. Apple, however, ships an estimated 75 million AirPods per year. By upgrading a device that is already the default accessory across its installed base of more than two billion active devices, Apple can achieve a scale of 'AI on your body' in a single product cycle that Meta can only dream of.
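For readers who want to check the arithmetic, here is a rough back-of-the-envelope sketch using only the two figures cited above. Both are estimates, and the comparison sets Meta's cumulative sales against Apple's annual shipments. The `camera_share` parameter is a purely hypothetical knob, not a reported number: it stands in for whatever fraction of AirPods shipments a camera-equipped model might capture. The even year-round shipping pace is also an assumption.

```python
# Figures as cited in the article; both are estimates, not audited numbers.
RAY_BAN_META_UNITS_THROUGH_2024 = 2_000_000  # cumulative sales through 2024
AIRPODS_UNITS_PER_YEAR = 75_000_000          # estimated annual shipments


def scale_ratio(airpods_per_year: float, meta_cumulative: float) -> float:
    """How many times larger one year of AirPods is than Meta's total to date."""
    return airpods_per_year / meta_cumulative


def days_to_match(airpods_per_year: float, meta_cumulative: float) -> float:
    """Days of AirPods shipments needed to equal Meta's total.

    Assumes an even shipping pace across 365 days (a simplification).
    """
    return meta_cumulative / (airpods_per_year / 365)


def camera_model_units(camera_share: float) -> float:
    """Annual units if a hypothetical share of AirPods are the camera model."""
    return AIRPODS_UNITS_PER_YEAR * camera_share


ratio = scale_ratio(AIRPODS_UNITS_PER_YEAR, RAY_BAN_META_UNITS_THROUGH_2024)
days = days_to_match(AIRPODS_UNITS_PER_YEAR, RAY_BAN_META_UNITS_THROUGH_2024)

print(f"One year of AirPods is {ratio:.1f}x Meta's cumulative sales")
print(f"Apple ships Meta's total in roughly {days:.1f} days")
print(f"At a hypothetical 10% share: {camera_model_units(0.10):,.0f} units a year")
```

Even at a hypothetical 10% share of AirPods shipments, a camera model would outsell Meta's cumulative total several times over in a single year, which is the point of the comparison.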

This difference in go-to-market strategy is everything. The Ray-Ban Meta asks you to adopt a new wearable with new social rules. The 'AirPods Ultra'—or whatever Apple calls them—will simply be the best AirPods you can buy. The killer feature won't be the camera itself, but the seamless integration with a life you already manage on your iPhone, from checking your shopping list by glancing at a product to getting visual walking directions whispered in your ear.

Of course, there is a massive bottleneck: Siri. Apple's beleaguered assistant is on the critical path, and the reported 2027 launch window underscores the sheer difficulty of the task. The hardware is reportedly close to done, but for these AirPods to be anything more than a gimmick, Apple Intelligence needs to deliver a major leap in conversational and environmental understanding. If the new Siri can’t reliably tell a tomato from a traffic light, the entire product is dead on arrival.

Compared to Meta AI, Apple's ambition is grander and far more integrated. Meta’s current assistant on the Ray-Bans is a clever party trick, but it often feels like a beta test bolted onto a pair of glasses, with noticeable latency and a reliance on the cloud. Apple appears to be building for a private, on-device-first experience where the AI has deep context from your calendar, messages, and Keychain. That is a moat that Meta, with its tarnished privacy reputation, will struggle to cross.

The ripple effect of this move will slam into the entire industry. Samsung, which has been teasing its 'Galaxy Glasses' for years, now risks being flanked before it even enters the market. Google's amorphous 'Android XR' strategy, in partnership with Qualcomm and others, has been predicated on the assumption that glasses are the next frontier. Apple is effectively arguing the next form factor is the one that's already here, just waiting for a brain.

However, Apple's gambit is not without significant risk. The primary counter-argument is the form factor itself. A camera in an earbud stem offers a 'chin-cam' view of the world: downward, partial, and oblique. How useful is that perspective for identifying objects you're looking directly at? This is a fundamental ergonomics and computer vision challenge, and Apple will need a clever solution for it.

Then there's the inevitable privacy backlash. Apple is smart to focus on low-res, non-shareable data and an indicator light, learning directly from the 'Glasshole' debacle. But a camera is a camera, and the optics of a device that can perpetually 'see' your surroundings will trigger a firestorm. Apple's privacy-first marketing will be tested like never before.

Finally, Apple is betting the farm on its ability to fix Siri, an assistant that has been slipping deadlines and under-delivering for a decade. The fact that a physical product is being held back by a software roadmap is a testament to the challenge. Any further delays to 'Apple Intelligence' would push the entire wearable AI vision into the distant future, giving rivals a precious window to react.

So what should Meta be doing right now? Panic would be a good start. The Ray-Ban Meta's camera-first, display-later strategy is now facing an existential threat. Meta must accelerate its roadmap for Project Hypernova, the version with a heads-up display, and get it to market. A display, even a simple one, creates a feature moat, offering visual feedback that an audio-only device cannot match. Meta's brief, uncontested lead in AI wearables is about to end, and its only hope is to outrun Apple by building a true AR device before Apple normalizes the AI hearable.
