Analysis

2026's Smart Glasses: Still Selling a Solution to the Wrong Problem

Tech giants are all-in on AI assistants and subtle form factors, but they continue to miss the core utility for everyday users. The screen, or lack thereof, remains the fundamental misunderstanding driving market stagnation.

Image: concept art of a person wearing sleek, futuristic smart glasses, with subtle, translucent UI elements visible in front of their eyes as they interact with the real world.

The smart glasses narrative sounds like a broken record. Despite proclamations that the debate over facial computers is "over" (a claim that often leans on Meta's Ray-Ban success as proof), the fundamental product remains misaligned with genuine user needs. Everyone is racing to jam an AI co-pilot into a sleek frame, but few are seriously considering *what* that AI should be doing, or *how* it should present information, in order to enhance daily life as more than a gimmick.

This obsession with AI-first, screen-last — or even screen-less — approaches is especially perplexing. Apple, reportedly gearing up for a late 2026 launch, is focusing heavily on AI-driven features and iPhone integration, deliberately sidestepping a "full augmented reality experience." Huawei, too, enters the fray with HarmonyOS-powered specs emphasizing real-time translation and proprietary AI. These are sophisticated audio wearables with cameras, not true smart glasses.

Ray-Ban Meta, for all its undeniable success in normalizing face-computers and integrating prescriptions with models like the Blayzer and Scriber Optics, fundamentally offers a screen-less experience. The product line has nailed the 'wearability' and 'style' aspects, proving people *will* put tech on their face if it looks good. But the actual 'smart' part, the visual augmentation that defines the category, is missing, relegated to an auditory prompt or a captured image.

This oversight is not just a semantic quibble; it's a strategic blunder. As "The Silent Screen War" pointed out, a smart glass without a persistent, immersive visual layer is not a smart glass. It's an audio device with a camera. While everyone's obsessing over whether their frames can run an LLM or integrate Siri, the true battleground for utility, the goddamn screen, is being ignored by the most prominent players.

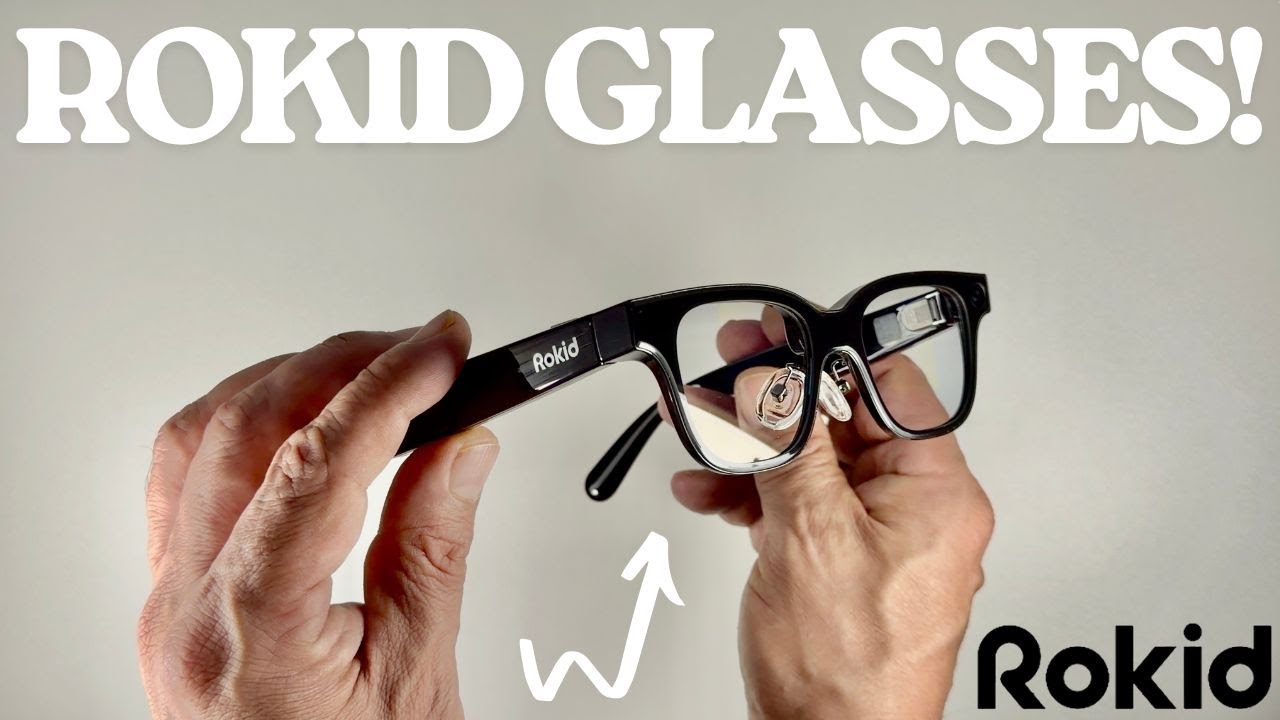

Meanwhile, companies like XREAL, Rokid, and RayNeo are quietly dominating the one factor that genuinely matters for AR adoption: the display. Rokid, in particular, has seen surprising success, reportedly surpassing Meta in unit sales by offering powerful, lightweight AI glasses *with* integrated displays and an open ecosystem that supports multiple AI assistants. They understand that AI is a feature, not the entire product.

Viture's 'Beast' XR Glasses further underscore this point, boasting IMAX-sized visuals on the go with Sony's Micro-OLED panels. These devices prioritize the *visual* experience, delivering immersive, large-screen content directly to the user's eyes. This is not just about entertainment; it's about making information perceptible in a way that AI-first, screen-less devices cannot.

The market seems bifurcated. On one hand, stylish camera-and-mic frames like Ray-Ban Meta are selling well because they address social acceptability and basic photo/audio capture. On the other hand, a quiet rebellion of actual AR glasses, like those from Rokid and Viture, is proving that when you deliver a compelling visual experience, users respond.

The persistent narrative from the tech giants suggests that the future is about an "always-on AI assistant that will live on your face and mediate your reality." But what reality are we mediating if it's purely auditory? How do crucial, context-dependent insights get delivered without a visible interface?

Consider the potential for real-time translation or information overlays. Huawei promises real-time translation, but how is that delivered without a meaningful visual component? A voice in your ear saying, "They said, 'Hello'" is less useful than seeing a foreign sign that reads "Hola" with its translation overlaid directly in your field of view.

The focus on computational specs and AI functionality, while important, risks building sophisticated brains without a sensory nervous system that truly leverages the 'glasses' form factor. We're getting increasingly powerful "ghosts in the machine" but no compelling visual manifestation of their intelligence within our line of sight.

Even as Snap reportedly nears a consumer launch for its $3 billion AR glasses bet, the core question remains: what visual experience will they deliver? If it's merely another camera and audio device, then it's another missed opportunity to truly augment the user's visual perception of the world.

The Department of Homeland Security's "ICE Glasses," which aim to identify individuals in real time using biometric data via a heads-up display, ironically highlight what enterprise users demand: actionable, in-situ visual information. Everyday users want the same direct visual feedback, for utility rather than surveillance, not just ambient AI noise.

Until the Apples and Metas of the world prioritize visual augmentation—a persistent, integrated display that blends digital information seamlessly with the real world—they will continue to misinterpret what everyday users truly want from smart glasses. The screen is not a secondary feature; it's the defining element.

The race to own the 'always-on AI assistant' is a worthy pursuit, but it's fundamentally disconnected from the form factor if it doesn't manifest visually. 2026, it seems, will still be a year where smart glasses largely get it wrong, mistaking an auditory ghost for a truly augmented reality.
